This article was discussed on Hacker News.

A few years ago I set out on a personal journey to study and watch a

performance of each of Shakespeare’s 37 plays. I’ve reached my goal and,

though it’s not a usual topic around here, I wanted to get my thoughts

down while fresh. I absolutely loved some of these plays and performances,

and so I’d like to highlight them, especially because my favorites are,

with one exception, not “popular” plays. Per tradition, I begin with my

least enjoyed plays and work my way up. All performances were either a

recording of a live stage or an adaptation, so they’re also available to

you if you’re interested, though in most cases not for free. I’ll mention

notable performances when applicable. The availability of a great

performance certainly influenced my play rankings.

Like many of you, I had assigned reading for several Shakespeare plays in

high school. I loathed these assignments. I wasn’t interested at the time,

nor was I mature enough to appreciate the writing. Even revisiting as an

adult, the conventional selections (Romeo and Juliet, Julius Caesar,

etc.) are not highly ranked on my list. For the next couple of decades I

thought that Shakespeare just wasn’t for me.

Then I watched the 1993 adaptation of Much Ado About Nothing and it

instantly became one of my favorite films. Why didn’t we read this in

high school?! Reading the play with footnotes helped to follow the

humor and allusions. Even with the film’s abridging, some of it still went

over my head. I soon discovered Asimov’s Guide to Shakespeare — yes,

that Asimov — which was exactly what I needed, and a perfect companion

while reading and watching the plays. If stumbling upon this turned out so

well, then I’d better keep going.

Wanting a solid set of the plays with good footnotes and editing — there

is no canonical version of the plays — I picked up a copy of The Norton

Shakespeare. Unfortunately it’s part of the college textbook racket, and

it shows. The collection is designed to be sold to students who will lug

them in bookbags, will typically open them face-up on a desk, and are

uninterested in their contents beyond class. It includes a short-term,

digital-only, DRMed component to prevent resale. After all, their target

audience will not read it again anyway. Though at least it’s complete and

compact, better for reference than reading.

In contrast, the Folger Shakespeare Library mass market paperbacks are

better for enthusiasts, both in form and format. They’re clearly built for

casual, comfortable reading. However, they’re not sold as a complete set,

and gathering used copies takes some work.

Also essential was BBC Television Shakespeare, produced between

1978 and 1985. Finding productions of the more obscure plays is tricky,

but it always provided a fallback. In some cases these were the best

performances anyway! When I mention “the BBC production” I mean this

series. Like many collections, they omit The Two Noble Kinsmen due to

unclear authorship, and for this reason I’m omitting it from my list as

well. As with any faithful production, I suggest subtitles on the first

viewing, as it aids with understanding. Shakespeare’s sentence structure

is sometimes difficult to parse by moderns, and on-screen text helps. (By

the way, a couple of handy SHA-1 sums for those who know how to use them:)

0ae909e5444c17183570407bd09a622d2827751e

55c77ed7afb8d377c9626527cc762bda7f3e1d83

As my list will show, my favorites are comedic comedies and histories,

particularly the two Henriads, each a group of four plays. The first —

Richard II, 1 Henry IV, 2 Henry IV, and Henry V — concerns events

around Henry V, in the late 14th and early 15th century. Those number

prefixes are parts, as in Henry IV has two parts. In my list I combine

parts as though a single play. The second — 1 Henry VI, 2 Henry VI, 3

Henry VI, Richard III — is about the Wars of the Roses, spanning the

15th century. Asimov’s book was essential for filling in the substantial

historical background for these plays, and my journey was also in part a

history study.

I especially enjoy villain monologues, and plays with them rank higher as

a result. It’s said that everyone is the hero of their own story, but

Shakespeare’s villains may know that they’re villains and revel in it,

bragging directly to the audience about all the trouble they’re going to

cause. In some cases they mock the audience’s sacred values, which, in a

way, is like the stand-up comedy of Shakespeare’s time. Notable examples

are Edmund (King Lear), Aaron (Titus Andronicus), Richard III, Iago

(Othello), and Shylock (The Merchant of Venice).

As with literature even today, authors are not experts in moral reasoning

and protagonists are often, on reflection, incredibly evil. Shakespeare is

no different, especially for historical events and people, praising those

who create mass misery (e.g. tyrants waging wars) and vilifying those who

improve everyone’s lives (e.g. anyone who deals with money). Up to and

including Shakespeare’s time, a pre-industrial army on the march was a

rolling humanitarian crisis, even in “friendly” territory,

slaughtering and stealing its way through the country in order to keep

going. So, much like suspension of disbelief, there’s a suspension of

morality where I engage with the material on its own moral terms, however

illogical it may be.

Now finally my list. The beginning will be short and negative because, to

be frank, I disliked some of the plays. Even Shakespeare had to work under

constraints. In his time none were regarded as great works. They weren’t

even viewed as literature, but similarly to how we consider television

scripts today. Also, around 20% of plays credited to Shakespeare were

collaborations of some degree, though the collaboration details have been

long lost. For simplicity, I will just refer to the author as Shakespeare.

(37) Timon of Athens

I have nothing positive to say about this play. It’s about a man who

borrows and spends recklessly, then learns all the wrong lessons from the

predictable results.

(36) The Two Gentlemen of Verona

Involves a couple of love triangles, a woman disguised as a man — a common

Shakespeare trope — and perhaps the worst ending to a play ever written.

The two “gentlemen” are terrible people and undeserving of their happy

ending. Though I enjoyed the scenes with Launce and Crab, the play’s fool

and his dog.

(35) Troilus and Cressida

Interesting that it’s set during the Iliad and features legendary

characters such as Achilles, Ajax, and Hector. I have no other positives

to note. Cressida’s abrupt change of character in the Greek camp later in

the play is baffling, as though part of the play has been lost, and ruins

an already dull play for me.

(34) The Winter’s Tale

A baby princess is lost, presumed dead, and raised by shepherds. She is

later rediscovered by her father as a young adult. It has a promising

start, but in the final act the main plot is hastily resolved off-stage

and seemingly replaced with a hastily rewritten ending that nonsensically

resolves a secondary story line.

(33) Cymbeline

The title refers to a legendary early King of Britain and is set in the

first century, but it is primarily about his daughter. The plot is

complicated so I won’t summarize it here. It’s long and I just didn’t

enjoy it. This is the second play in the list to feature a woman disguised

as a man.

(32) The Tempest

A political exile stranded on an island in the Mediterranean gains magical

powers through study, with the help of a spirit creates a tempest that

strands his enemies on his island, then gently torments them until he’s

satisfied that he’s had his revenge. It’s an okay play.

More interesting is the historical context behind the play. It’s based

loosely on events around the founding of Jamestown, Virginia. Until this

play, Shakespeare and Jamestown were, in my mind, unrelated historical

events. In fact, Pocahontas very nearly met Shakespeare, missing him by

just a couple of years, but she did meet his rival, Ben Jonson. I spent

far more time catching up on real history, including reading the

fascinating True Reportory, than I did on the play.

(31) The Taming of the Shrew

About a man courting and “taming” an ill-tempered woman, the shrew. The

seeming moral of the play was outdated even in Shakespeare’s time, and

it’s unclear what was intended. Technically it’s a play within a play, and

an outer frame presents the play as part of an elaborate prank. However,

the outer frame is dropped and never revisited, indicating that perhaps

this part of the play was lost. The BBC production skips this framing

entirely and plays it straight.

(30) All’s Well That Ends Well

Helena, a low-born enterprising young woman, saves a king’s life. She’s in

love with a nobleman, Bertram, and the king orders him to marry her as

repayment. He spurns her solely due to her low upbringing and flees the

country. She gives chase, and eventually wins him over. Helena is a great

character, and Bertram is utterly undeserving of her, which ruins the play

for me in an unearned ending.

(29) Antony and Cleopatra

A tragedy about people who we know for sure existed, the first such on the

list so far. The sequel to Julius Caesar, completing the story of the

Second Triumvirate. Historically interesting, but the title characters

were terrible, selfish people, including in the play, and they aren’t

interesting enough to make up for it.

I enjoyed the portrayal of Octavian as a shrewd politician.

(28) Julius Caesar

A classic school reading assignment. Caesar’s death in front of the Statue

of Pompey is obviously poetic, and so every performance loves playing it

up. Antony’s speech is my favorite part of the play. I didn’t dislike this

play, but nor did I find it interesting revisiting it as an adult.

(27) Coriolanus

About the career of a legendary Roman general and war hero who attempts to

enter politics. He despises the plebeians, which gets him into trouble,

but all he really wants is to please his mother. Stratford Festival has a

worthy adaptation in a contemporary setting.

(26) Henry VIII

He reigned from 1509 to 1547, but the play only covers Henry VIII’s first

divorce. It paved the way for the English Reformation, though the play has

surprisingly little to say about it, or his murder spree. It’s set a few decades

after the events of Richard III — too distant to truly connect with the

second Henriad.

While I appreciate its historical context — with liberal dramatic license

— it’s my least favorite of the English histories. It’s not part of an

epic tetralogy, and the subject matter is mundane. My favorite scene is

Katherine (Catherine in the history books) firmly rejecting the court’s

jurisdiction and walking out. My favorite line: “No man’s pie is freed

from his ambitious finger.”

(25) Romeo and Juliet

Another classic reading assignment that requires no description. A

beautiful play, but I just don’t connect with its romantic core.

(24) The Merchant of Venice

An infamously antisemitic play where a Jewish moneylender, Shylock, loans

to the titular merchant of Venice where the collateral is the original

“pound of flesh,” providing the source for that cliche. Though even in his

prejudice, Shakespeare can’t help but write multifaceted characters,

particularly with Shylock’s famous “If you prick us, do we not bleed?”

speech.

(23) Twelfth Night

Twins, a young man and a woman, are separated by a shipwreck. The woman

disguises herself as a man and takes employment with a local duke and

falls in love with him, but her employment requires her to carry love

letters to the duke’s love interest. In the meantime the brother arrives,

unaware his sister is in town in disguise, and everyone gets the twins

mixed up leading to comedy. It’s a fun play. The title has nothing to do

with the play, but refers to the holiday when the play was first

performed.

The play is the source of the famous quote, “Some are born great, some

achieve greatness, and some have greatness thrust upon them.” It’s used as

part of a joke, and when I heard it, I had thought the play was mocking

some original source.

(22) Pericles

A Greek play about a royal family — father, mother, daughter — separated

by unfortunate — if contrived — circumstances, each thinking the others

dead, but all tearfully reunited in a happy ending. My favorite part is

the daughter, Marina, talking her way out of trouble: “She’s able to

freeze the god Priapus and undo a whole generation.”

The BBC production stirred me, particularly the scene where Pericles and

Marina are reunited.

(21) Richard II

Richard II, grandson of the famed Edward III, was a young King of England

from 1377 to 1399. At least in the play, he carelessly makes dangerous

enemies of his friends, and so is deposed by Henry Bolingbroke, who goes

on to become Henry IV. The play is primarily about this abrupt transition

of power, and it is the first play of the first Henriad. The conflict in

this play creates tensions that will not be resolved until 1485, the end

of the Wars of the Roses. Shakespeare spends seven additional plays on

this huge, interesting subject.

For me, Richard II is the most dull of the Henriad plays. It’s a slow

start, but establishes the groundwork for the greater plays that follow.

The BBC production of the first Henriad has “linked” casting where the

same actors play the same roles through the four plays, which makes this

an even more important watch.

(20) Othello

Another of the famous tragedies. Othello, an important Venetian general and

“the Moor of Venice,” is dispatched to Venice-controlled Cyprus to defend

against an attack by the Ottoman Turks. Iago, who has been overlooked for

promotion by Othello, treacherously seeks revenge, secretly sabotaging all

involved while they call him “honest Iago.” Though his schemes quickly go

well beyond revenge, and he continues sowing chaos just for his own fun.

I watched a few adaptations, and I most enjoyed the 2015 Royal Shakespeare

Company Othello, which places it in a modern setting and requires few

changes to do so.

(19) The Comedy of Errors

A fun, short play about a highly contrived situation: Two pairs of twins,

where each pair of brothers has been given the same name, are separated at

birth. As adults they all end up in the same town, and everyone mixes them

up leading to comedy. It’s the lightest of Shakespeare’s plays, but also

lacks depth.

(18) Hamlet

Another common, more senior, high school reading assignment. Shakespeare’s

longest play, and probably the most subtle. In everything spoken between

Hamlet and his murderous uncle, Claudius, one must read between the lines.

Their real meanings are obscured by courtly language — familiar to

Shakespeare’s audience, but not moderns. Asimov is great for understanding

the political maneuvering, which is a lot like a game of chess. It made me

appreciate the play more than I would have otherwise.

You’d be hard-pressed to find something that beats the faithful,

star-studded 1996 major film adaptation.

(17) Richard III

The final play of the second Henriad. Much of the play is Richard III

winking at the audience, monologuing about his villainous plans, then

executing those plans without remorse. Makes cheering for the bad guy fun.

If you want to see an evil schemer get away with it, at least right up

until the end when he gets his comeuppance, this is the play for you. This

play is the source of the famous “My kingdom for a horse.”

I liked two different performances for different reasons. The 1995 major

film puts the play in the World War II era. It’s solid and does

well standing alone. The BBC production has linked casting with the three

parts of Henry VI, which allows one to enjoy it in full in its broader

context. It’s also well-performed, but obviously has less spectacle and a

lower budget.

(16) The Merry Wives of Windsor

The comedy spin-off of Henry IV. Allegedly, Elizabeth I liked the

character of John Falstaff from Henry IV so much — I can’t blame her! —

that she demanded another play with the character, and so Shakespeare

wrote this play. The play brings over several characters from Henry IV.

Unfortunately it’s in name only and they hardly behave like the same

characters. Despite this, it’s still fun and does not require knowledge of

Henry IV.

Falstaff ineptly attempts to seduce two married women, the titular wives,

who play along in order to get revenge on him. However, their husbands are

not in on the prank. One suspects infidelity and hatches his own plans.

The confusion leads to the comedy.

The 2018 Royal Shakespeare Company production aptly puts it in

a modern suburban setting.

(15) Titus Andronicus

A play about a legendary Roman general committed to duty above all else,

even the lives of his own sons. He and his family become brutal victims of

political rivals, and in return he gets his own brutal revenge. It’s by far

Shakespeare’s most violent and disturbing play. It’s a bit too violent

even for me, but it ranks this highly because Aaron the Moor is such a

fantastic character, another villain that loves winking at the audience.

His lines throughout the play make me smile: “If one good deed in all my

life I did, I do repent it from my very soul.”

I enjoyed the 1999 major film, which puts it in a contemporary

setting.

(14) King Lear

The titular, mythological king of pre-Roman Britain wants to retire, and

so he divides his kingdom between his three daughters. However, after

petty selfishness on Lear’s part, he disowns the most deserving daughter,

while the other two scheme against one another.

Some of the scenes in this play are my favorite among Shakespeare, such as

Edmund’s monologue on bastards where he criticizes the status quo and

mocks the audience’s beliefs. It also has one of the best fools, who while

playing dumb, is both observant and wise. That’s most of Shakespeare’s

fools, but it’s especially true in King Lear (“This is not altogether

fool, my lord.”). This fool uses this “tenure” to openly mock the king to

his face, the only character that can do so without repercussions.

My favorite performance was the 2015 Stratford Festival stage

production, especially for its Edmund, Lear, and Fool.

(13) Macbeth

The shortest tragedy, a common reading assignment, and a perfect example

of literature I could not appreciate without more maturity. Even the plays

I dislike have beautiful poetry, but I especially love it in Macbeth.

The history behind Macbeth is itself fascinating. The play was written

custom for the newly-crowned King James I — of King James Version fame —

and even calls him out in the audience. James I was obsessed with witch

hunts, so the play includes witchcraft. The character Banquo was by

tradition considered to be his ancestor.

My favorite production by far — I watched a number of them! — was the

2021 film. It should be an approachable introduction for Shakespeare

newcomers more interested in drama than comedy. Notably for me, it departs

from typical productions in that Macbeth and Lady Macbeth do not scream at

each other — perhaps normally a side effect of speaking loudly for stage

performance. Particularly in Act 1, Scene 7 (“screw your courage to the

sticking place”). In the film they argue calmly, like a couple in a

genuine, healthy relationship, making the tragedy that much more tragic.

That being said, it drops the ball with the porter scene — a bit of comic

relief just after Macbeth murders Duncan. There’s knocking at the gate,

and the porter, charged with attending it, is hungover and takes his time.

In a monologue he imagines himself porter to Hell, and on each impatient

knock considers the different souls he would be greeting. Of all the

porter scenes I watched, the best porter was in the 2017 Stratford Festival

production, where he is both charismatic and hilarious. I wish I

could share a clip.

(12) King John

King John, brother of “Coeur de Lion” Richard I, ruled in the early 13th

century. His reign led to the Magna Carta, and he’s also the Prince John

of the Robin Hood legend, though because it’s a history, and paints John

in a positive light, that legend isn’t included. It depicts fascinating,

real historical events and people, including Eleanor of Aquitaine.

It also has one of my favorite Shakespeare characters, Philip the

Bastard, who gets all the coolest lines. I especially love his

introductory scene where his lineage is disputed by his half-brother and

Eleanor, impressed, essentially adopts him on the spot.

The 2015 Stratford Festival stage performance is wonderful, and

I’ve re-watched it a few times. The performances are all great.

(11–9) Henry VI

As previously noted, this is actually three plays. At 3–4 hours apiece,

it’s about the length of a modern television season. I thought it might

take a while to consume, but I was completely sucked in, watching and

studying the whole trilogy in a single weekend.

Henry V died young in 1422, and his infant son became Henry VI, leaving

England ruled by his uncles. As an adult he was a weak king, which allowed

the conflicts of the previously-mentioned Richard II to bubble up into

the Wars of the Roses, a bloody power conflict between the Lancasters and

Yorks. The play features historical people including Joan la Pucelle

(“Joan of Arc”), English war hero John Talbot, and Jack Cade.

Richard III wraps up the conflicts of Henry VI, forming the second

Henriad. When watching/reading the play, keep in mind that the play is

anti-French, anti-York, and (implicitly) pro-Tudor.

Most of the first part was probably not written by Shakespeare, but rather

adapted from an existing play to fill out the backstory. I think I can see

the “seams” between the original and the edits that introduce the roses.

I loved the BBC production of the second Henriad. Producing such an epic

story must be daunting, and it’s amazing what they could convey with such

limited budget and means. It has hilarious and clever cinematography for

the scene where the Countess of Auvergne attempts to trap Talbot (Part 1,

Act 2, Scene 3). Again, I wish I could share a clip!

(8) Henry V

Due to his amazing victories, most notably at Agincourt where, for

once, Shakespeare isn’t exaggerating the odds, Henry V is one of the great

kings of English history. This play is a follow-up to Richard II and

Henry IV, completing the first Henriad, and depicts Henry V’s war with

France. Outside of the classroom, this is one of Shakespeare’s most

popular plays.

The obvious choice for viewing is the 1989 major film, which, by

borrowing a few scenes from Henry IV, attempts a standalone experience,

though with limited success. I watched it before Henry IV, and I could

not understand why the film was so sentimental about a character that

hadn’t even appeared yet. It probably has the best Saint Crispin’s Day

Speech ever performed, in part because it’s placed in a broader

context than originally intended. The introduction is bold, as is

Exeter’s ultimatum delivery. It cleverly, and without changing his

lines, also depicts Montjoy, the French messenger, as sympathetic to the

English, also not originally intended. I didn’t realize this until I

watched other productions.

The BBC production is also worthy, in large part because of its linked

casting with Richard II and Henry IV. It’s also unabridged, including

the whole glove thing, for better or worse.

(7–6) Henry IV

People will think I’m crazy, but yes, I’m placing Henry IV above Henry

V. My reason is just two words: John Falstaff. This character is one of

Shakespeare’s greatest creations, and really makes these plays for me. As

previously noted, this is two plays mainly because John Falstaff was such

a huge hit. The sequel mostly retreads the same ground, but that’s fine!

I’ve read and re-read all the Falstaff scenes because they’re so fun. I

now have a habit of quoting Falstaff, and it drives my wife nuts.

The Falstaff role makes or breaks a Henry IV production, and my love for

this play is in large part thanks to the phenomenal BBC production. It has

a warm, charismatic Falstaff that perfectly nails the role. It’s

great even beyond Falstaff, of course. At the end of part 2, I tear up

seeing Henry V test the chief justice. I adore this production. What a

masterpiece.

(5) A Midsummer Night’s Dream

A popular, fun, frivolous play that I enjoyed even more than I expected,

where faeries interfere with Athenians who wander into their forest. The

“rude mechanicals” are charming, especially the naive earnestness of Nick

Bottom, making them my favorite part of the play.

My enjoyment is largely thanks to a 2014 stage production with

great performances all around, great cinematography, and incredible

effects. Highly recommended. Honorable mention goes to the great Nick

Bottom performances of the BBC production and the 1999 major film.

(4) As You Like It

A pastoral comedy about idyllic rural life, and the source of the famous

quote “All the world’s a stage.” A duke has deposed his duke brother,

exiling him and his followers to the forest where the rest of the play

takes place. The main character, Rosalind, is one of the exiles, and,

disguised as a man named Ganymede, flees into the forest with her cousin.

There she runs into her also-exiled love interest, Orlando. While still

disguised as Ganymede, she roleplays as Rosalind — that is, herself — to

help him practice wooing herself. Crazy and fun.

A couple of my favorite lines are “There’s no clock in the forest” and

“falser than vows made in wine.” It’s an unusually musical play, and has a

big, happy ending. The fool, Touchstone, is one of my favorite fools,

named such because he tests the character of everyone with whom he comes

in contact.

It ranks so highly because of an endearing 2019 production by Kentucky

Shakespeare, which sets the story in 19th-century Kentucky. This is

the most amateur production I’ve shared so far — literally Shakespeare in

the park — but it’s just so enjoyable. Their Rosalind is fantastic and

really makes the play work. I’ve listened to just the audio of the play,

like a podcast, many times now.

(3) Measure for Measure

A comedy about justice and mercy. The duke of Vienna announces he will be

away on a trip to Poland, but secretly poses as a monk in order to get his

finger on the pulse of his city. Unfortunately the man running the city in

his stead is corrupt, and the softhearted duke can’t help but pull strings

behind the scenes to undo the damage, and more. He sets up a scheme such

that, after his dramatic return as duke, the plot is unraveled while

simultaneously testing the character of all involved.

I love so many of the characters and elements of this play. I smile when

the duke jumps into action, my heart wrenches at Isabella’s impassioned

speech for mercy (“it is excellent to have a giant’s strength,

but it is tyrannous to use it like a giant”), I admire the provost’s

selfless loyalty to the duke, I laugh when Lucio the “fantastic” keeps

putting his foot in his mouth, and I cry when Mariana begs Isabella to

forgive. All around a wonderful play.

Like so many already, a big part of my love for the play is the BBC

production, which is full of great performances, particularly

the duke, Isabella, and Lucio.

(2) Much Ado About Nothing

As the play that finally got me interested in Shakespeare, of course it’s

near the top of the list. Forget Romeo and Juliet: Benedick and Beatrice

are Shakespeare’s greatest romantic pairing!

Don Pedro, Prince of Aragon, stops in Messina with his soldiers while

returning from a military action. While in town there’s a matchmaking plot

and lots of eavesdropping, and then chaos created by the wicked Don John,

brother to Don Pedro. It’s a fun, light, hilarious play. It also features

another of Shakespeare’s great comic characters, Dogberry, famous for his

malapropisms.

This is a very popular play with tons of productions, though I only

watched a few of them. The previously-mentioned 1993 adaption remains my

favorite. It does some abridging, but honestly, it makes the play better

and improves the comedic beats.

(1) Love’s Labour’s Lost

Finally, my favorite play of all, and an unusual one to be at the top of

the list. Much of the play is subtle parody and so makes for a poor first

play for newcomers, who would not be familiar enough with Shakespeare’s

language to distinguish parody from genuine.

The King of Navarre and three lords swear an oath to seclude themselves

in study, swearing off the company of women. Then the French princess and

her court arrive, the four men secretly write love letters in violation

of their oaths, and comedy ensues. There are also various eccentric side

characters mixed into the plot to spice it up. It’s all a ton of fun and

ends with an inept play within a play about the “nine worthies.”

The major reason I love this play so much is a literally perfect 2017

production by Stratford Festival. I love every aspect of this

production such that I can’t even pick a favorite element. I was hooked

within the first minute.


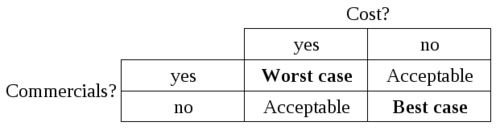

There has been a lot of talk online about the fragility of URL

shortening services, particularly in relation to Twitter and its 140

character limit on posts (based on SMS limits). These services create

a single point of failure and break mechanisms of the web that we rely

on. Several solutions have been proposed, so over the next couple

years we get to see which ones end up getting adopted.


In a
